Data centers connect thousands of network interfaces in a relatively small space: servers, switches, storage systems and management infrastructure are all cabled together in high-density rack rows that must remain organized, serviceable and expandable for years without major disruption. A single office floor might have 200 cable runs; a medium-density data center room can have ten times as many in a fraction of the footprint.

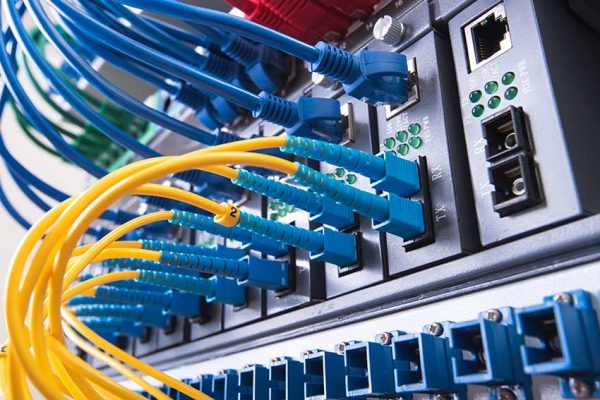

The two core cable systems in a commercial data center are fiber optic cabling and Cat6A copper. Fiber (typically OM4 multimode for the intra-facility backbone, OS2 single-mode for longer distances) carries the high-capacity backbone traffic between core, aggregation and access layer switches. Cat6A copper connects individual servers to the top-of-rack switches within each cabinet row, handling the 10GBASE-T server connections that make up the majority of the physical port count in most deployments.
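As a rough illustration, the media choices described above can be written as a small planning helper. This is a minimal sketch rather than a design rule: the `Link` type, the role names and the `OM4_PLANNING_LIMIT_M` threshold are hypothetical, and the actual reach of OM4 depends on the optics and data rate in use. The 100 m figure is the standard channel limit for 10GBASE-T over Cat6A.

```python
from dataclasses import dataclass

# Standard channel limit for 10GBASE-T over Cat6A copper.
CAT6A_MAX_M = 100

# Hypothetical planning threshold for switching from OM4 to OS2.
# Real OM4 reach varies with the transceiver and data rate.
OM4_PLANNING_LIMIT_M = 100


@dataclass(frozen=True)
class Link:
    role: str        # "server" (server to top-of-rack) or "backbone" (switch to switch)
    length_m: float  # planned cable length in meters


def pick_medium(link: Link) -> str:
    """Return a cable type for a planned link, following the split described above."""
    if link.length_m <= 0:
        raise ValueError("length must be positive")
    if link.role == "server":
        if link.length_m > CAT6A_MAX_M:
            raise ValueError("run exceeds the 100 m Cat6A channel limit")
        return "Cat6A"
    if link.role == "backbone":
        # Multimode for shorter in-facility runs, single-mode beyond the threshold.
        return "OM4" if link.length_m <= OM4_PLANNING_LIMIT_M else "OS2"
    raise ValueError(f"unknown link role: {link.role!r}")
```

For example, `pick_medium(Link("server", 3))` returns `"Cat6A"`, while a 500 m backbone run returns `"OS2"` under the assumed threshold.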

Every cabling decision in a data center (fiber type, run routing, cable length, management hardware, labeling convention) has downstream consequences for airflow, cooling efficiency, troubleshooting time, and the ability to add capacity without disrupting active infrastructure. Making these decisions before pulling a single cable is what separates organized data center infrastructure from the tangled messes that create operational risk.